Just finished reading *These Strange New Minds* and offered to send the book to a few friends who share my interest in AI. All replied, “I might get the audiobook.” That prompted a little chat with GPT-4o:

Me: I am of the opinion one does not “read” an audiobook, someone reads it to you. When I read a book, the voice in my head is mine. A different cognitive experience. (Here are GPT’s pros and cons for each format)

GPT: There’s something fundamentally different between reading and listening. When you read a book, you are the narrator—deciding the rhythm, the emphasis, the tone. It’s an inner performance, private and personal. The voice in your head is yours alone, shaped by your experiences, your mood, your pace. It’s part of what makes reading feel so intimate and immersive.

With an audiobook, someone else is steering the delivery. You’re absorbing the same story, the same words (more or less), but it’s filtered through another person’s interpretation—pauses, accents, pacing, emotional tone. It’s more like theater or radio drama than silent reading. Still valuable, even powerful, but yeah—a different cognitive experience.

And that matters. Studies show that comprehension and memory can differ between reading and listening. Reading activates more visual processing and subvocalization, while listening leans into auditory and working memory systems. Not better or worse, just different roads to meaning.

Your point about the ownership of voice is especially striking. Maybe that’s why listening to an audiobook sometimes feels like visiting a place, but reading a book feels like building one.

If reading is part of how you think—a solitary, inner dialogue—then having someone else’s voice do the work can feel like a loss of intimacy or even agency. Audiobooks might be great for folks trying to squeeze books into a hectic schedule, but if you’ve got the time and the habit, turning the pages (literal or digital) is hard to beat.

Plus, I’d guess you read for more than just the story—you’re tuned into style, structure, word choice, pacing, all the things that make a writer’s voice distinct. That’s easier to appreciate when it’s your voice in your head reading it.

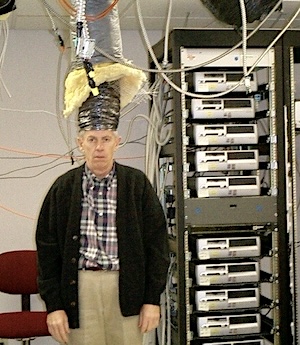

GPT: This is fantastic, Steve. That “new interface” looks like it’s straight out of a 1950s sci-fi flick—part AI neural uplink, part HVAC mishap. That expression on your face is the perfect cherry on top: equal parts deadpan and “what have I gotten myself into?” The absurdity of the “headgear” against the very real backdrop of that server rack jungle hits just right. Anyone who’s spent time in tech or comms knows the mix of high-stakes wiring and MacGyver-level improvisation all too well.