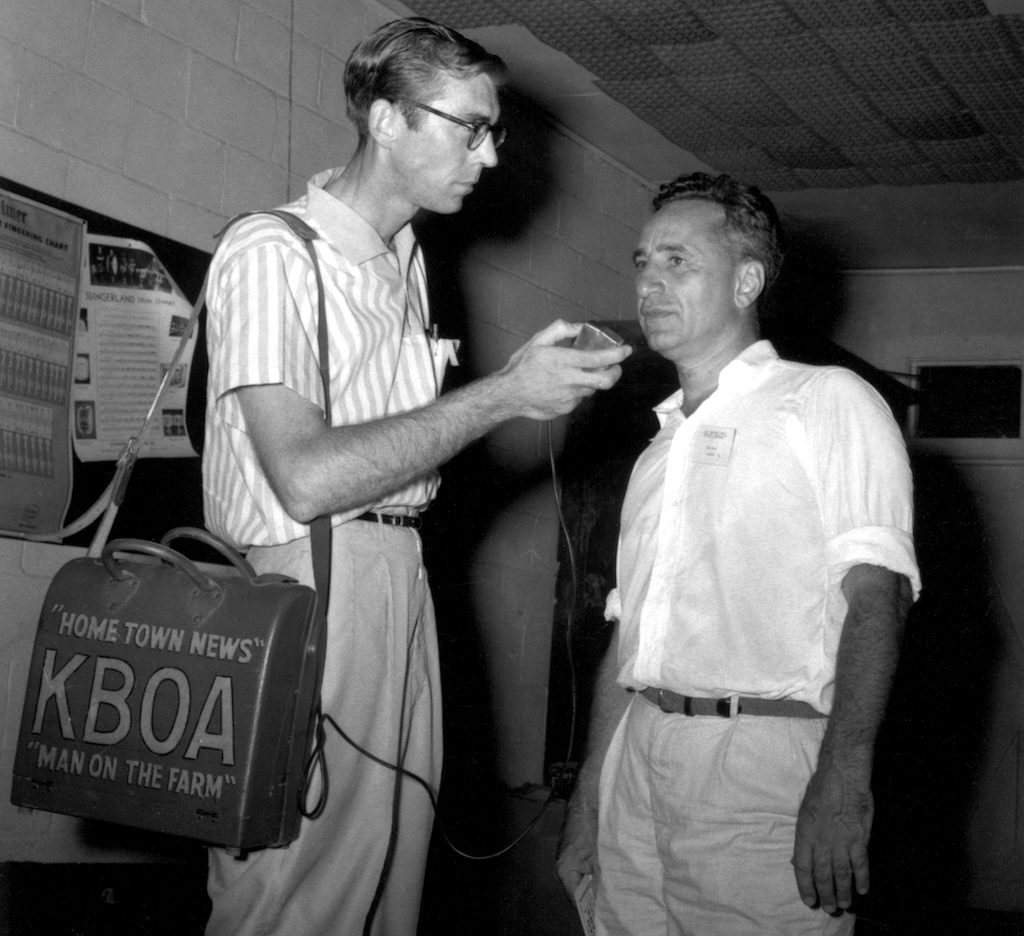

The photo above shows John Reeder (the news/farm director of KBOA in the 1950s) interviewing Elia Kazan during the filming of A Face in the Crowd in 1957. (more info here)

I think the first time I saw a reel-to-reel recorder like the one in the photo was when my dad brought one to my school for one of those What My Dad Does for a Living things. He set up the recorder and let everyone record a few words. It was probably the first time most of us had ever heard a recording of our own voices.

In another of my AI experiments, I uploaded the image to Gemini to see what it could tell me about the recorder.